“As to methods, there may be a million and then some, but principles are few. The man who grasps principles can successfully select his own methods. The man who tries methods, ignoring principles, is sure to have trouble.”

— Harrington Emerson

Most product managers copy. Very few actually think.

Someone ships infinite scroll and ten teams add it to their app. Notion launches AI writing and suddenly every SaaS has a sparkle icon in the corner. You’ve seen this. You’ve probably done it. I’ve done it too.

First principles thinking is the opposite of that instinct. It’s a way of solving problems where you break the situation down into its real parts, then rebuild. Not copy, not analogy. Just the raw atoms of the problem.

In this post, I’ll show you exactly how to apply first principles as a product manager, with very recent examples from companies shipping right now. Cursor, DeepSeek, Perplexity. Not war stories from a 2010 Harvard case study.

Let’s start with the example everyone uses, for a reason.

The Elon story, but updated

In the early 2000s, Elon Musk wanted to send humans to Mars. He started calling rocket-building agencies to figure out what it would cost. The quotes came back at up to $65 million per rocket.

He did something most people don’t. He broke the rocket down into its actual physical ingredients: aerospace-grade aluminum alloys, titanium, copper, and carbon fiber. Then he priced those same materials on the commodity markets. The answer: around 2% of the quoted rocket price.

That gap became SpaceX.

Fast forward to today. Starship is built to lift more payload per dollar than anything before it, and NASA has contracted SpaceX to land astronauts on the Moon, all resting on the same first principles cost model Musk started with in 2002. The rocket itself is different. The thinking is identical.

So here’s the thing. You don’t need to build rockets to use this. You just need to stop accepting what you’re told and start asking what’s actually true.

What first principles actually means (in plain English)

Break the thing into its real parts. Reassemble them. Question the assumptions underneath.

That’s it. That’s the whole philosophy.

Three habits make this possible:

- Break systems down into their real components. Not the labels people use, the actual moving pieces.

- Combine parts across systems to create something new and valuable.

- Question analogies. Especially the confident ones everyone repeats at conferences.

Now let’s get into the product management part.

Why every PM job description asks for this

Look at any well-written PM job description. You’ll see “first principles thinker” in the requirements pretty much every time. There’s a reason.

A PM who thinks from analogy gives you copies. A PM who thinks from first principles gives you category leaders.

That’s the whole difference.

Analogy vs first principles: the hidden cost

When a junior PM gets a product teardown exercise, their first instinct is UI. Move this button. Shrink that banner. Change the color. They’re reasoning from analogy. They’ve seen other apps that look nicer, so they want to make this one look nicer too.

A seasoned PM opens with different questions. Who is the user? What job are they hiring this product to do? What’s the actual product strategy? Only after answering those do they touch the interface.

The difference isn’t skill. It’s the layer they’re thinking at.

Analogy gets you incremental. Sometimes that’s fine. But if your competitor shipped a feature three months ago and you’re only now cloning it, you’re three months behind and chasing their second version. That’s a bad place to be.

Case study 1: Cursor and the IDE nobody thought to rebuild

Every major code editor in 2022 was basically the same thing. A text editor with some smart autocomplete bolted on. GitHub Copilot was the best version of that. Add AI suggestions to the side of your existing editor and you’re done.

Four MIT classmates at a company called Anysphere asked a different question. What would a code editor look like if the AI was the core, and the editor was built around it?

Not AI plus editor. Editor designed for AI.

They forked Visual Studio Code so developers wouldn’t have to learn a new interface. But behind that familiar shell, they rebuilt how the whole thing works. Instead of feeding the AI one file at a time, they indexed the entire codebase. The model could now reason about imports and dependencies across dozens of files at once.

Copilot users were getting autocomplete. Cursor users were getting a coding partner that understood the whole project.

Here’s what happened next. By January 2025 they hit $100M ARR. By end of 2025 they crossed $1B. By Q1 2026 they doubled again to $2B. Their November 2025 round valued the company at $29.3 billion, with Cursor now reportedly used inside over half the Fortune 500.

All because they refused to treat “add AI to existing editor” as the default path.

The PM lesson: When a new capability shows up (LLMs, real-time sync, on-device ML, whatever), most teams plug it into their existing product. The ones who win ask what the product should have looked like if this capability had always existed.

Pick the right problem before you touch the solution

First principles forces you deeper into the problem before you let yourself think about the solution. You keep double-clicking until you hit the real thing.

The easiest tool for this is the Five Whys framework. Not a fancy tool. Just ask “why” five times when a metric dips or someone demands a feature.

Example I use in HelloPM cohorts. Someone says “our activation is low, let’s build onboarding videos.”

- Why is activation low? Users aren’t completing signup.

- Why? They drop at step 3, the payment page.

- Why? They don’t know what they’re paying for yet.

- Why? Product value isn’t clear before payment.

- Why? We put the paywall too early in the flow.

The answer was never “build onboarding videos.” The answer was “move the paywall.” Videos would have burned a full sprint and moved activation by maybe 2%. Moving the paywall moved it by 40%.
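The second “why” above (where exactly do users drop?) is the one you can answer with data instead of opinion. Here’s a minimal sketch of that check. The step names and user counts are made up for illustration, so swap in your own funnel export.

```python
from typing import List, Tuple

# Hypothetical funnel: (step name, users who reached that step).
FUNNEL: List[Tuple[str, int]] = [
    ("landing", 10_000),
    ("signup_form", 6_000),
    ("payment_page", 4_800),
    ("activated", 960),
]


def step_conversions(funnel: List[Tuple[str, int]]) -> List[Tuple[str, str, float]]:
    """Return (from_step, to_step, share of users who continue) per adjacent pair."""
    rates = []
    for (name_a, users_a), (name_b, users_b) in zip(funnel, funnel[1:]):
        rate = users_b / users_a if users_a else 0.0
        rates.append((name_a, name_b, rate))
    return rates


def worst_step(funnel: List[Tuple[str, int]]) -> Tuple[str, str, float]:
    """The transition that keeps the smallest share of its incoming users."""
    return min(step_conversions(funnel), key=lambda r: r[2])


if __name__ == "__main__":
    for a, b, rate in step_conversions(FUNNEL):
        print(f"{a} -> {b}: {rate:.0%} continue")
    a, b, rate = worst_step(FUNNEL)
    print(f"Biggest drop: {a} -> {b}")
```

With these invented numbers the weakest transition is the one leaving the payment page, which is where the remaining whys should start. The code only tells you where users leave. The why still comes from talking to them.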

Frameworks like Jobs To Be Done and Fishbone Diagrams work on the same principle. Break the problem into its root causes before picking the solution.

Try this the next time a stakeholder asks you for a feature. Ask these four questions before you touch a PRD:

- What is the user actually trying to accomplish?

- What’s stopping them today?

- What have they already tried, and why didn’t it work?

- If I solved the root cause, would they still need the feature being requested?

If the answer to the last question is “probably not,” you just saved your team a quarter of work.

Form vs function: the flying car problem

People love asking “where are the flying cars?”

The answer is we’ve had them for 120 years. They’re called planes. We got so attached to the form (a car that flies) that we missed the function (traveling through the air). Function was solved. Form didn’t match the sci-fi picture, so people think the problem is still open.

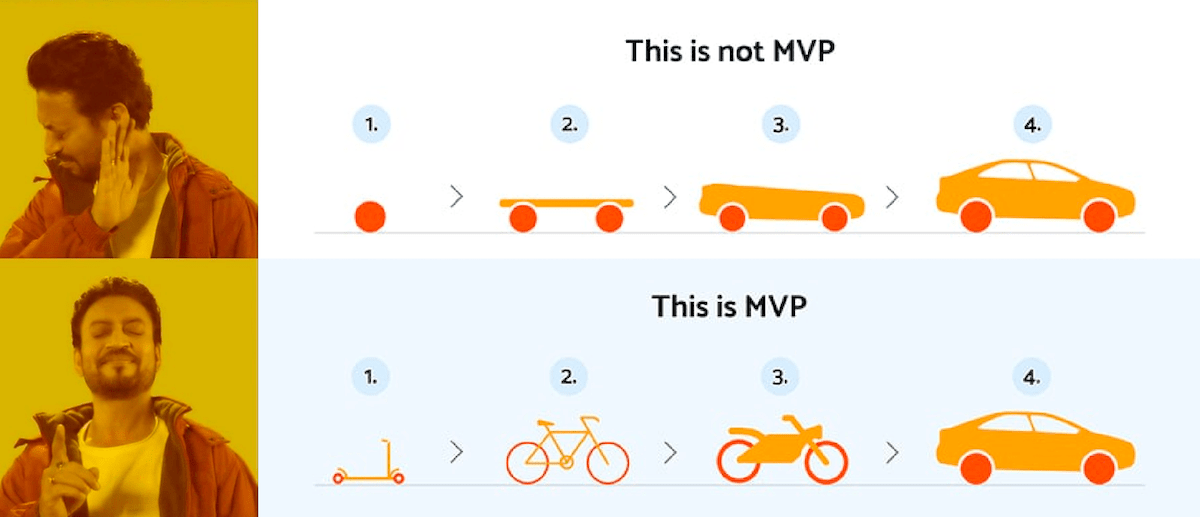

PMs fall into this trap constantly, especially when designing MVPs. You see what a finished product looks like, so you try to build a smaller version of that shape. But shape isn’t what users pay for. Function is.

A good MVP is ugly. It solves the core job just well enough to test whether people want it. That’s the whole point. A polished MVP is just an expensive, slow MVP.

The drawing below still applies. If the function is “get from A to B,” your MVP is a skateboard, not a quarter of a car.


Case study 2: DeepSeek and the $5 million question

By the middle of 2024, training a frontier AI model had become a game for companies with billion-dollar compute budgets. GPT-4 class models were rumored to cost $100M+ to train. Everyone in the industry accepted this as the cost of playing.

A small Chinese lab called DeepSeek didn’t accept it. They asked the first principles question: what does it actually cost in raw GPU hours to train a model this size, and where is that money going?

They broke the cost problem down layer by layer.

They rebuilt the architecture using Mixture of Experts, so only a small fraction of the model activates per query. They switched from the usual 16-bit (BF16) training to FP8 precision for most operations, roughly halving the memory those operations need. They co-designed the software with the hardware, squeezing every bit of throughput out of each H800 GPU.

The result: DeepSeek-V3 trained on 2,048 H800 GPUs for about two months. Total reported training cost: roughly $5.58 million.
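You can sanity-check that headline number yourself, which is the first principles move in miniature. The sketch below assumes the two inputs DeepSeek’s V3 technical report gives, about 2.788 million H800 GPU-hours and a rental price of $2 per GPU-hour. Treat both as reported assumptions rather than audited figures. The function names are mine.

```python
GPUS = 2_048               # H800 count quoted above
GPU_HOURS = 2_788_000      # total GPU-hours as reported (assumption)
RENTAL_RATE_USD = 2.0      # assumed rental price per GPU-hour


def training_cost_usd(gpu_hours: float, rate_per_hour: float) -> float:
    """Cost is just hours of compute times the hourly price."""
    return gpu_hours * rate_per_hour


def wall_clock_days(gpu_hours: float, gpus: int) -> float:
    """Days of calendar time if every GPU runs around the clock."""
    return gpu_hours / gpus / 24


if __name__ == "__main__":
    cost = training_cost_usd(GPU_HOURS, RENTAL_RATE_USD)
    days = wall_clock_days(GPU_HOURS, GPUS)
    print(f"Estimated cost: ${cost / 1e6:.2f}M")
    print(f"Wall-clock time: {days:.0f} days")
```

The arithmetic lands on the roughly $5.58 million and two months quoted above. It covers the final training run only, not salaries, hardware purchases, or earlier experiments, so read it as a cost breakdown and not a company budget.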

When they released it in late December 2024, it matched or beat models that cost 10 to 20 times more. A month later, after DeepSeek followed up with its R1 reasoning model, NVIDIA lost close to $600 billion in market cap in a single day because the entire industry’s cost assumption had just been exposed as soft.

The PM lesson: When every competitor accepts the same cost structure, there’s usually a first principles breakthrough hiding in plain sight. Somebody will find it. Might as well be you. The question to ask at your next ops review: “What cost are we treating as fixed that isn’t?”

Prioritization and the art of saying no

As a PM, you’ll get flooded with ideas. From engineering, marketing, sales, customers, your CEO’s friend from a dinner party. Most of them sound reasonable in isolation.

First principles is how you cut through this.

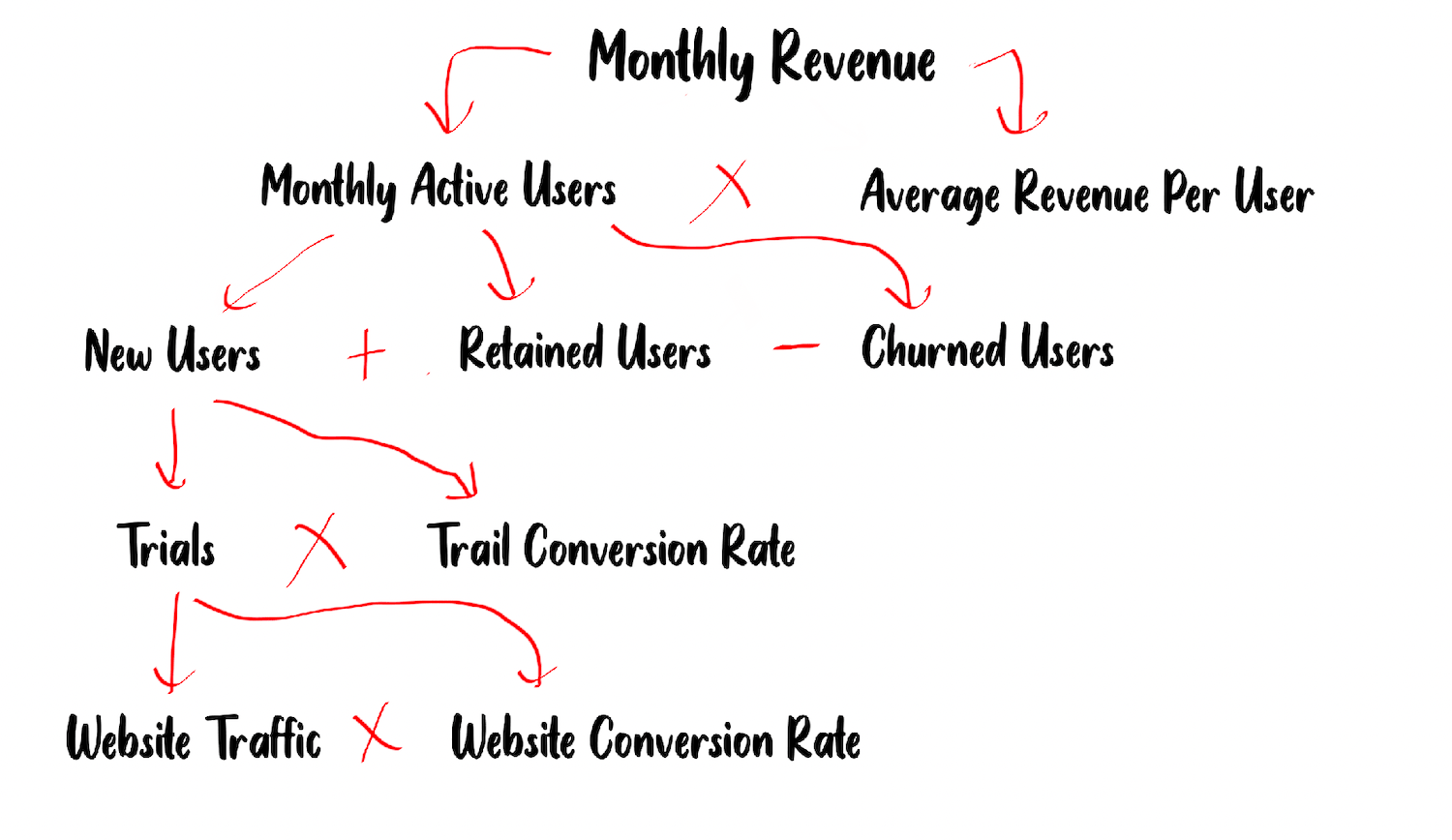

Break your key business metric into its fundamental components. For a SaaS or subscription product, revenue might look like this:

Now when an idea comes in, ask three questions. Which of these variables does this move? By how much? What’s the confidence?

If the idea doesn’t move any of them meaningfully, it’s a no. Write back to the person who proposed it. Show them the math. Nine times out of ten they’ll drop it themselves, because now it’s a reasoned conversation instead of an opinion war.

This is also how you avoid the most common PM mistake: working on the variable that’s already optimized. If your activation is 2% and your site traffic converts at 18%, you don’t need more traffic. You need to fix activation. Anyone who pitches you traffic ideas gets a polite no.

The same logic runs in the other direction. If your website conversion rate is the weak link, fix conversion before you spend on more traffic.
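If you want to make the three questions (which variable, by how much, what confidence) mechanical, a tiny model is enough. This is a rough sketch, not a forecasting tool. The four-factor revenue formula, the baseline numbers, and both ideas are assumptions I picked for illustration, reusing the 18% and 2% rates from the example above.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Funnel:
    visitors: float          # monthly site visitors
    signup_rate: float       # visitor -> signup
    activation_rate: float   # signup -> activated, paying user
    arpu: float              # monthly revenue per activated user

    def revenue(self) -> float:
        return self.visitors * self.signup_rate * self.activation_rate * self.arpu


@dataclass(frozen=True)
class Idea:
    name: str
    variable: str       # which Funnel field the idea moves
    new_value: float    # where we think that field lands
    confidence: float   # 0.0 to 1.0, how sure we are


def expected_lift(base: Funnel, idea: Idea) -> float:
    """Revenue change from the idea, discounted by our confidence in it."""
    changed = replace(base, **{idea.variable: idea.new_value})
    return (changed.revenue() - base.revenue()) * idea.confidence


def rank(base: Funnel, ideas: List[Idea]) -> List[Idea]:
    """Ideas sorted from highest to lowest expected lift."""
    return sorted(ideas, key=lambda i: expected_lift(base, i), reverse=True)


BASE = Funnel(visitors=50_000, signup_rate=0.18, activation_rate=0.02, arpu=30.0)
IDEAS = [
    Idea("SEO push", "visitors", 55_000, confidence=0.7),
    Idea("Move the paywall", "activation_rate", 0.03, confidence=0.5),
]

if __name__ == "__main__":
    for idea in rank(BASE, IDEAS):
        print(f"{idea.name}: {expected_lift(BASE, idea):,.0f} per month")
```

Even with lower confidence, the activation idea comes out well ahead of the traffic idea here, because activation is the variable with the most headroom. Your numbers will differ, but sharing the math is what turns the “no” into a conversation.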

Killing bad ideas is one of the hardest parts of being a PM. First principles gives you the framework to do it without being the villain.

Modularity: the Bezos obsession

One of the most underrated uses of first principles is structural. When you see any product as a set of modules instead of one solid block, you open up options that didn’t exist before.

The classic example: Amazon’s internal services (compute, storage, database, queueing) were built to power their e-commerce site. Bezos famously mandated that every team expose their services via APIs, so nothing could be a black box. Years later, Amazon wrapped those same modules and sold them as AWS. That wrapping is now a $100 billion+ business that powers roughly a third of the cloud.

The Amazon team didn’t “build AWS.” They decoupled Amazon. AWS fell out.

As a PM, push for modularity in your design, operations, and engineering teams from day one. It pays off in three ways you can’t predict ahead of time:

- You can debug faster because each piece can be tested alone.

- You can create new products by recombining existing pieces instead of building from scratch.

- You can swap a module when a better version shows up, without a ground-up rewrite.

Case study 3: Perplexity’s Comet and the browser that wasn’t a browser

Here’s a story that’s playing out right now.

For 30 years, a browser has been a tabbed window for viewing web pages. Chrome, Safari, Firefox, Edge. Different brands, same bones. Adding AI to this shape meant bolting on a sidebar or a tab that opened ChatGPT. Everyone did this. Chrome added Gemini. Edge added Copilot.

Perplexity asked what a browser should look like if the AI was the primary interface and the web pages were just sources. Not “browser with AI.” AI first, browser second.

They launched Comet in July 2025. Instead of you navigating, you tell Comet what you want to accomplish. It opens tabs, reads them, compares them, summarizes them, and acts on your behalf. You think out loud. It does the browsing. By March 2026 Comet was on Android and iOS too, with Perplexity pushing hard against Chrome’s dominance.

Whether Comet wins the browser war is still an open question. Chrome and Safari still hold roughly 85% of global traffic, and switching browsers is a habit people rarely change. But that’s not the point for us as PMs. The point is this: every major browser team was adding AI as a feature. Perplexity restructured the category by asking what the job should look like from scratch.

The PM lesson: Every incumbent in your category is probably doing the equivalent of adding AI to a sidebar. Somewhere, someone is rebuilding the whole thing around a capability you assumed was just a feature. Which side of that equation do you want to be on?

Finding the right metrics

Every PM is drowning in metrics. DAU, MAU, CAC, LTV, NPS, CES, CSAT. Pick any three letters.

Copying metrics from other companies is the metrics version of copying features. Each product has a different value engine. The metrics should come from that engine, not from the top of a TechCrunch article.

At INDmoney, the question we kept coming back to was simple: does this feature put more money in the user’s hand? Not engagement. Not sessions. Not time in app. Money. Tangible, measurable, user-level outcome.

Every feature, every experiment, every prioritization call mapped back to that fundamental question. Because we’d deconstructed the user’s real motivation for opening the app in the first place.

For your product, what’s the equivalent? A user of a meditation app doesn’t want “sessions completed.” They want to feel calmer. A Duolingo user doesn’t want “streaks.” They want to actually speak the language. A user of a hiring tool doesn’t want “applications submitted.” They want a job.

Once you have the root outcome, your metric tree builds itself. The hard work is getting to that one sentence.

“Most people use analytics the way a drunk uses a lamppost, for support rather than illumination.” — David Ogilvy

Most analytics dashboards exist to justify decisions people have already made. First principles flips this. You pick the outcome first, then build the tree of metrics that actually lead to it.

When first principles is the wrong tool

Let me be honest about something most blog posts skip. First principles thinking isn’t always the right move.

It’s expensive. It takes time, mental energy, and usually requires pushing back on stakeholders who just want an answer by EOD. For small, reversible decisions (which button color, which copy variant, which notification time), you don’t need to rebuild the world. Analogy and testing work fine, and they’re faster.

Use first principles when:

- The stakes are high and one decision shapes the next five years of the product.

- You’re entering a new category or building from scratch.

- Everyone in your industry agrees on something that feels suspicious.

- You’ve copied the best practices and still aren’t winning.

- A metric is stuck and no amount of tactical change is moving it.

Use analogy and pattern matching when:

- It’s a small UX tweak you can A/B test in a week.

- You’re picking between well-known infrastructure patterns where the trade-offs are documented.

- Speed matters more than elegance, and the decision is reversible.

The skill isn’t using first principles for everything. It’s knowing which situations deserve it.

How to actually build the habit

Reading about first principles is easy. Training your brain to work this way takes reps. Here’s what’s worked for me and for the PMs we’ve placed at Google, Amazon, Microsoft, and Indian unicorns through HelloPM.

Start small. Pick one decision a week. Spend 30 minutes writing out the assumptions you’re about to accept without questioning. Then question each one. You’ll catch two or three that don’t actually hold.

Draw the tree. When someone brings you a problem, don’t jump to solutions. Draw the problem decomposition on paper first. If you can’t draw it, you don’t understand it yet. That’s a real signal, not a minor detail.

Read founders, not pundits. First principles lives in founder memoirs and technical deep reads, not in listicles. Read how the Cursor team actually structured their editor. Read DeepSeek’s V3 paper. The abstract lesson follows the concrete story, not the other way around.

Test one assumption every week. Pick one “everyone knows” assumption in your product and check it against your own data. Everyone knows users don’t read. Is that true for your users? Everyone knows freemium works. Does it for you? Everyone knows mobile first. Are you sure your users aren’t on desktop? You’ll be surprised how many of these break when you actually look.

Explain it to a five-year-old. If you can’t explain your problem and solution to someone with no context in simple words, you’re still thinking in your industry’s shorthand. That shorthand is where sloppy assumptions hide.

Closing thought

First principles is just a fancy name for refusing to outsource your thinking. Break it down. Reassemble it. Question what everyone else accepts.

Analogies are cheap and fast. Save them for low-stakes calls where speed wins. First principles is expensive and slow. Save it for the decisions that shape your next five years.

The PMs I’ve seen build products that actually matter all learned one skill. They stopped accepting the frame of the problem as given. They broke it open, looked at the pieces, and rebuilt.

Copy-cats ship faster. Original thinkers define categories. Which one are you building this week?

